Google's API key architecture, originally designed for public-facing services such as Maps, Firebase, and YouTube, was never intended to serve as authentication for sensitive AI systems. For more than a decade, Google explicitly told developers that API keys in the AIza... format were safe to embed in client-side code and mobile app packages. They were public identifiers, not secrets.

That changed with the arrival of Gemini. Those same keys are now live credentials for one of the most powerful AI systems in the world.

In February 2026, Truffle Security published research showing that when the Gemini API (Generative Language API) is enabled on a Google Cloud project, every existing API key in that project silently gains access to the Gemini endpoints: no warning, no notification, and no confirmation dialog. Developers who followed Google's own guidance and embedded Maps or Firebase keys in their apps now unknowingly hold live credentials for a powerful AI service.

CloudSEK's BeVigil, the world's first mobile app security search engine, scanned the top 10,000 Android apps by install count to gauge the attack surface of this vulnerability. The findings are alarming.

BeVigil is CloudSEK's mobile app security search engine. It indexes more than one million Android apps and continuously scans them for hardcoded secrets, misconfigured APIs, exposed credentials, and other security vulnerabilities. Researchers, developers, and security companies use BeVigil to identify risks in mobile apps before threat actors can exploit them.

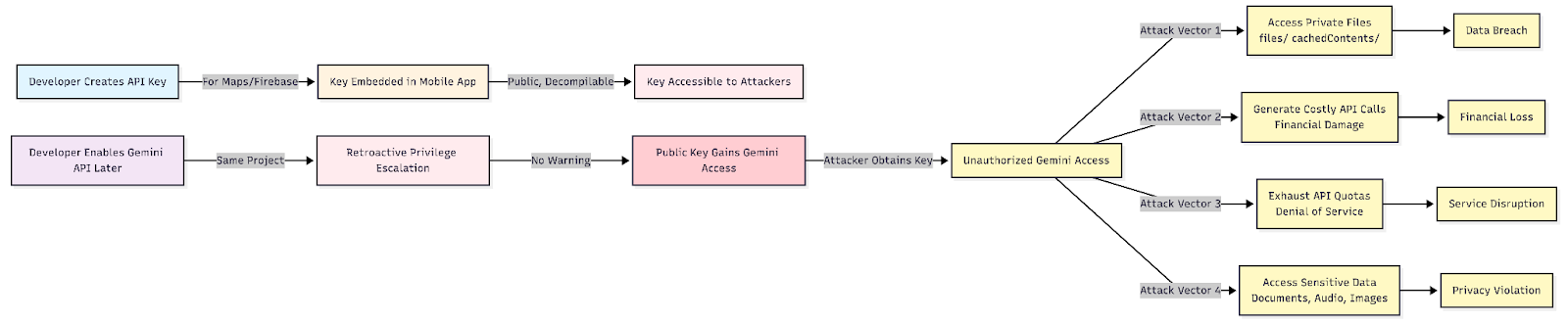

Google uses a single API key format (AIza...) for two fundamentally different purposes: public project identification and sensitive authentication. The core problem, as documented by Truffle Security, is a retroactive privilege escalation:

An attacker who obtains one of these keys can:

CloudSEK's research team examined the top 10,000 Android apps ranked by install count. Using automated secret-detection rules, we identified Google API keys in the AIza... format hardcoded in app packages, then checked each key against the Gemini API to confirm live access to the Generative Language API.
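The detection step can be sketched in a few lines. This is a minimal illustration, not CloudSEK's actual rule set: the pattern (the `AIza` prefix followed by 35 URL-safe characters) is an assumption borrowed from common open-source secret scanners, and the names `find_google_api_keys` and `redact` are hypothetical.

```python
import re

# Assumption: a Google API key is "AIza" plus 35 URL-safe characters.
# This mirrors common open-source scanner rules, not CloudSEK's own.
# The trailing lookahead rejects longer strings that merely contain the shape.
GOOGLE_API_KEY_RE = re.compile(r"AIza[0-9A-Za-z_\-]{35}(?![0-9A-Za-z_\-])")


def find_google_api_keys(text: str) -> list:
    """Return unique key-shaped strings in first-seen order."""
    matches = (m.group(0) for m in GOOGLE_API_KEY_RE.finditer(text))
    return list(dict.fromkeys(matches))


def redact(key: str) -> str:
    """Keep only the prefix and the last four characters for reporting."""
    return f"{key[:4]}...{key[-4:]}"


if __name__ == "__main__":
    fake_key = "AIza" + "x" * 35  # synthetic value, not a real credential
    sample = f'<string name="google_api_key">{fake_key}</string>'
    for key in find_google_api_keys(sample):
        print(redact(key))
```

A pattern match only shows that a string has the right shape. Whether the key is live, and whether it reaches Gemini, still has to be confirmed against the API as described above.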

The results: 32 live Google API keys across 22 unique apps, with a combined install base of more than 500 million users.

Table 1: Vulnerable apps with exposed Google API keys

Note: All identified keys were responsibly disclosed to the respective app developers. API keys have been redacted in the published version.

Among the 22 vulnerable apps, BeVigil confirmed active data exposure in ELSA Speak: AI Learn & Speak English, an English-learning platform with more than 10 million installs.

Using the key exposed in the ELSA Speak app package, CloudSEK researchers queried the Gemini Files API endpoint and received a 200 OK response listing the active files stored in the project's Gemini workspace. The exposed data included:

This confirms that user-submitted audio content, potentially including speech recordings used for AI-powered English pronunciation training, was accessible to anyone holding the hardcoded API key found in ELSA's publicly available app package.

The remaining 31 keys returned empty file stores ({}) when the /files endpoint was queried, meaning no files were stored in those Gemini projects at the time of testing. The keys nevertheless remain valid Gemini credentials and can be used to run up API charges, exhaust quotas, or access data uploaded in the future.
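The three outcomes described here (files listed, an empty store, or no access) can be told apart with a small classifier. The sketch below makes assumptions that should be checked against Google's current API reference: the `v1beta/files` URL, the `x-goog-api-key` header, and a top-level `files` array in the listing response. The function names are hypothetical. The request is only built, never sent, and the call is a read-only GET.

```python
import json
import urllib.request

# Assumption: the file listing lives at this v1beta path and accepts the key
# in the x-goog-api-key header. Verify against the official API reference.
FILES_URL = "https://generativelanguage.googleapis.com/v1beta/files"


def build_files_request(api_key: str) -> urllib.request.Request:
    """Build (but do not send) a read-only GET for the file listing."""
    return urllib.request.Request(
        FILES_URL, headers={"x-goog-api-key": api_key}, method="GET"
    )


def classify_files_response(status: int, body: str) -> str:
    """Map an HTTP status and JSON body to one of the outcomes in the text."""
    if status != 200:
        return "no_gemini_access"
    try:
        payload = json.loads(body or "{}")
    except json.JSONDecodeError:
        return "unparseable"
    # Assumption: stored files appear under a top-level "files" array.
    files = payload.get("files") if isinstance(payload, dict) else None
    return "files_exposed" if files else "live_key_empty_store"
```

Under these assumptions an empty `{}` body with a 200 status maps to `live_key_empty_store`: the key is a working Gemini credential even though nothing is stored behind it yet.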

The mobile ecosystem presents a distinct and underestimated attack surface for this class of vulnerability:

The exposure of Gemini-enabled Google API keys in mobile apps creates a multi-vector risk:

For end users

For developers and organizations

The financial consequences of exposed Gemini API keys are not theoretical. The following cases, each reported publicly on forums such as Reddit and the Google Cloud community forums, illustrate how quickly unauthorized access can turn into losses that threaten a company's survival.

A 24-year-old developer running a Firebase-based educational app discovered firsthand how Google's legacy key architecture can turn routine activation of the AI API into a catastrophe. His Google Cloud project had existed for years with auto-generated, unrestricted API keys, the kind Google itself instructed developers to embed in client-facing code. When he enabled the Gemini API on the project through AI Studio for internal testing, he received no warning that his existing unrestricted keys had just gained silent access to expensive AI inference endpoints.

An attacker found his old key, which had always been "public" and previously harmless, and used it to spam Gemini inference from a botnet. The developer had budget alerts configured and acted within ten minutes of receiving a $40 alert. He revoked every key and disabled the Gemini API immediately. It was not enough.

The Google Cloud billing console has a reporting lag of roughly 30 hours. When the dashboard updated the next day, the $40 alert had become a $15,400 bill. Six days after opening a support case, he was still receiving only automated replies. The account was scheduled for suspension when the charge failed on the 1st of the month, which would have taken down his entire Firebase-dependent startup. This is a structural failure: Google merged the concept of "public keys" with server-side AI secrets, and enabling Gemini should have triggered a mandatory key restriction or forced the creation of a new, scoped key.

A small company in Japan was using the Gemini API solely to build a handful of internal productivity tools, not a public-facing product. Its deployment was protected by firewall-level IP access restrictions, and all source repositories were private. Despite these precautions, its API key was somehow obtained and exploited.

The anomalous activity began at around 4:00 a.m. JST on March 12, 2025. By the time the team noticed, during a routine check later that day, charges had already passed roughly 7 million yen (about USD 44,000). The company immediately paused the API and contacted Google. Despite these emergency actions, charges kept accumulating until late the following day, with the final total reaching approximately 20.36 million JPY, or about USD 128,000.

Google denied the company's initial adjustment request. At the time of reporting, the company was in communication with Google and gathering evidence while facing a real risk of bankruptcy. The fact that charges kept accruing even after the API was paused highlights a critical gap: Google's enforcement and billing pipeline does not stop instantly once a key is revoked, leaving developers exposed during the window between action and effect.

A three-person development team in Mexico, with normal Google Cloud spend of $180 per month, found its API key compromised between February 11 and 12, 2025. Within 48 hours the stolen key generated $82,314 in charges, roughly 455 times the team's typical monthly usage, almost entirely from Gemini 2.0 Pro image and text generation calls.

The team responded immediately: deleting the compromised key, disabling the Gemini APIs, rotating all credentials, enabling two-factor authentication, and locking down IAM permissions. Despite this textbook response, the Google representative initially cited the platform's Shared Responsibility Model as grounds for holding the company liable, a bill that, if enforced, would have exceeded the company's entire bank balance. The team filed a cybercrime report with the FBI and noted that the timing coincided with a broader pattern of Chinese AI companies targeting US AI infrastructure to distill model outputs.

This case underscores the systemic absence of basic financial guardrails: no automatic halt at anomalous usage multiples, no forced confirmation of extreme spending spikes, no temporary freeze pending human review, and no default per-API spending limits. A jump from $180 per month to $82,000 in 48 hours is not normal variability; it is unmistakable abuse. Yet Google's platform had no automated mechanism to prevent it.
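To make the "anomalous usage multiple" idea concrete, the sketch below computes how far a spending window sits above a baseline and flags it for a halt. It is a hypothetical illustration, not a feature of Google Cloud: the `SpendGuard` class and the 10x default threshold are invented for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SpendGuard:
    """Hypothetical guardrail: halt when spend exceeds a multiple of baseline."""

    baseline_monthly_usd: float   # typical monthly spend for the project
    halt_multiple: float = 10.0   # illustrative threshold, not a Google setting

    def __post_init__(self) -> None:
        if self.baseline_monthly_usd <= 0:
            raise ValueError("baseline must be positive")

    def multiple(self, window_spend_usd: float) -> float:
        """How many times the monthly baseline the observed spend represents."""
        return window_spend_usd / self.baseline_monthly_usd

    def should_halt(self, window_spend_usd: float) -> bool:
        """True when the observed spend reaches the halt threshold."""
        return self.multiple(window_spend_usd) >= self.halt_multiple


if __name__ == "__main__":
    guard = SpendGuard(baseline_monthly_usd=180.0)
    print(round(guard.multiple(82_314.0), 1), guard.should_halt(82_314.0))
```

With the figures reported for this case, $82,314 against a $180 baseline is about 457 times normal monthly spend, far beyond any plausible threshold. A check of this kind would have tripped once spend passed $1,800 under the illustrative 10x setting.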

CloudSEK's research team followed responsible disclosure practices throughout this investigation:

CloudSEK researchers performed no write or data-modification operations with the discovered keys. The Gemini Files API endpoint was queried only to confirm that the Generative Language API was reachable and to assess the scope of any data exposure, in line with ethical security research standards.

The proliferation of Google API keys in mobile app packages is a well-documented phenomenon in the mobile security research community. What is new, and what makes this finding particularly urgent, is that a class of keys once considered harmless public identifiers has been silently elevated to sensitive AI credentials.

BeVigil's analysis of the top 10,000 Android apps shows that this is not a theoretical risk. Hundreds of millions of users are served by apps whose hardcoded keys now provide unauthorized access to Google's Gemini AI infrastructure.

As AI capabilities are increasingly integrated into existing cloud infrastructure, the attack surface for legacy credentials expands in ways that neither developers nor security teams anticipated. BeVigil continues to monitor the mobile app ecosystem for this and other emerging credential-exposure patterns.

Developers: scan your app for free at bevigil.com. If you believe your app may be affected, rotate your Google API keys immediately and restrict them to only the services your app requires.
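As a rough aid for the restriction step, the sketch below checks whether a key's configuration would let it reach Gemini. It assumes the configuration has been exported as a dictionary shaped like the Google Cloud API Keys resource (`restrictions.apiTargets[].service`), and that the Generative Language API is enabled in the project. The function name and the sample dictionaries are hypothetical, so verify the field names against the official reference before relying on it.

```python
GEMINI_SERVICE = "generativelanguage.googleapis.com"


def key_reaches_gemini(key_config: dict) -> bool:
    """Return True if this key configuration would permit Gemini calls.

    Assumes the Generative Language API is enabled in the key's project and
    that key_config follows the restrictions.apiTargets[].service layout.
    """
    restrictions = key_config.get("restrictions") or {}
    targets = restrictions.get("apiTargets") or []
    if not targets:
        # No API restrictions: the key works for every enabled API.
        return True
    return any(target.get("service") == GEMINI_SERVICE for target in targets)


if __name__ == "__main__":
    unrestricted = {"displayName": "legacy-android-key"}
    maps_only = {
        "restrictions": {
            "apiTargets": [{"service": "maps-backend.googleapis.com"}]
        }
    }
    print(key_reaches_gemini(unrestricted), key_reaches_gemini(maps_only))
```

A key flagged by this check should be rotated and then restricted to the services the app actually uses.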