🚀 أصبحت CloudSek أول شركة للأمن السيبراني من أصل هندي تتلقى استثمارات منها ولاية أمريكية صندوق

اقرأ المزيد

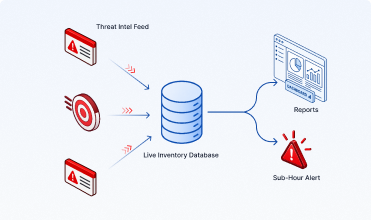

يستخدم محرك الذكاء الاصطناعي السياقي من CloudSek معلومات التهديدات الإلكترونية ومراقبة سطح الهجوم للتنبؤ بشكل استباقي ومنع موظفي المؤسسة وعملائها من التصيد الاحتيالي وتسرب البيانات وتهديدات الويب المظلم والعلامة التجارية وتهديدات الأشعة تحت الحمراء.

AiVigil عبارة عن منصة مراقبة سطح هجوم أصلية تعمل بالذكاء الاصطناعي وتقوم باستمرار باكتشاف ومراقبة وتأمين البنية التحتية للذكاء الاصطناعي المكشوفة وخوادم MCP وبيانات اعتماد الذكاء الاصطناعي المسربة وقواعد بيانات المتجهات وسير عمل الوكلاء وظلال الذكاء الاصطناعي عبر الإنترنت.

.avif)

.avif)

.png)

.png)

.avif)

.png)

.png)

.png)

.png)

.png)

.png)

.png)

.png)

.png)

.png)

.avif)

.png)

.png)

.png)

.avif)

.webp)

.png)

تثق الشركات العالمية وشركات Fortune 500 في CloudSEK لتعزيز وضعها في مجال الأمن السيبراني.

تساعد AiVigil المؤسسات على اكتشاف ومراقبة وتأمين أسطح هجمات الذكاء الاصطناعي عبر النماذج والمطالبات وواجهات برمجة التطبيقات وعمليات تكامل الذكاء الاصطناعي. تم تصميمه لبيئات الذكاء الاصطناعي الحديثة مع الكشف المستمر عن تهديدات الذكاء الاصطناعي والمراقبة الأمنية للذكاء الاصطناعي في الوقت الفعلي.

.png)

اكتشف خوادم MCP،

مخازن المتجهات، سير عمل الوكيل، نماذج الذكاء الاصطناعي.

تشغيل التنبيهات وإعداد التقارير والاستجابة

من وجهة نظر أصول الذكاء الاصطناعي الموحدة.

.png)

سجل التعرض باستخدام وكالة الوكيل وحالة المصادقة ونصف قطر الانفجار والإشارات الحية.

تكتشف AiVigil باستمرار أسطح هجمات الذكاء الاصطناعي وتحللها وتراقبها عبر النماذج والمطالبات وواجهات برمجة التطبيقات وعمليات تكامل الذكاء الاصطناعي. تم تصميمه لتوفير اكتشاف التهديدات بالذكاء الاصطناعي في الوقت الفعلي، ورؤية مخاطر الذكاء الاصطناعي، والمراقبة الأمنية المستمرة للذكاء الاصطناعي.

AIVigil continuously discovers, analyzes, and monitors AI attack surfaces across models, prompts, APIs, and AI integrations. Built to deliver real-time AI threat detection, AI risk visibility, and continuous AI security monitoring.

Discover MCP servers, vector stores in, agentic workflows, AI Models.

Function: Finding the Shadow AI.

MCP-Specific Scanning, Agentic Workflow Analysis, Supply Chain Scanning, Active AI Red Teaming.

Function: Contextualizing the Vulnerability.

Real-Time Threat Intel Pipeline, Unified Asset Inventory (AI BOM), Automated Reporting & Remediation.

Function: Operationalizing the Posture.

يمكنك رؤية سطح هجوم الذكاء الاصطناعي بالكامل ومراقبته وتأمينه من منصة واحدة.

أحدث الأدلة المفيدة من CloudSek.

إدارة سطح هجوم الذكاء الاصطناعي هي الاكتشاف المستمر للمخاطر الأمنية ومراقبتها والحد منها عبر البنية التحتية للذكاء الاصطناعي للمؤسسة - بما في ذلك تطبيقات LLM وواجهات برمجة تطبيقات الذكاء الاصطناعي وخوادم MCP ومخازن المتجهات وسير عمل الوكلاء ونقاط نهاية الاستدلال النموذجي. وهي تحدد نواقل الوصول الأولية لطبقة الذكاء الاصطناعي - مثل الحقن الفوري وإساءة استخدام النموذج وواجهات برمجة تطبيقات الذكاء الاصطناعي المكشوفة - قبل أن يتمكن المهاجمون من استغلالها وربطها بمسار هجوم قابل للتنفيذ.

الحقن الفوري هو أسلوب هجوم حيث تتجاوز المدخلات الضارة تعليمات نظام نموذج الذكاء الاصطناعي لاستخراج البيانات أو تنفيذ الإجراءات غير المصرح بها أو تجاوز ضوابط السلامة. هناك نوعان رئيسيان: الحقن الفوري المباشر (المدخلات التي يوفرها المستخدم والتي تتجاوز مطالبات النظام) والحقن الفوري غير المباشر (المحتوى الضار المضمن في المستندات أو مصادر البيانات الخارجية التي يعالجها نموذج الذكاء الاصطناعي). تراقب AiVigil باستمرار نقاط نهاية LLM وواجهات برمجة تطبيقات الذكاء الاصطناعي وسير عمل الوكيل لكلا النوعين - مع تحديد نقاط ضعف الحقن الفوري قبل استغلالها.

يكتشف AiVigil كل مكون من سطح هجوم الذكاء الاصطناعي الخاص بك، بما في ذلك: خوادم MCP (بروتوكول السياق النموذجي) ومخازن المتجهات وقواعد البيانات المضمنة وسير عمل الوكلاء ووكلاء الذكاء الاصطناعي ونقاط نهاية نماذج اللغة الكبيرة (LLM) والتطبيقات المدمجة بالذكاء الاصطناعي وواجهات برمجة التطبيقات وسجلات النماذج ومجموعات GPU وخدمات الاستدلال بالذكاء الاصطناعي وخطوط بيانات التدريب. الاكتشاف مستمر ويتضمن عمليات نشر الظل للذكاء الاصطناعي - أنظمة الذكاء الاصطناعي التي تعمل دون وعي فريق الأمان.

تم تصميم ماسحات SAST وDAST والثغرات الأمنية التقليدية لمعالجة العيوب على مستوى التعليمات البرمجية في البرامج التقليدية. لا يمكنهم اكتشاف الحقن الفوري أو إساءة استخدام النموذج أو اختطاف العوامل أو التعرض لقاعدة بيانات المتجهات لأن هذه المخاطر تعمل في نموذج الذكاء الاصطناعي وطبقة الاستدلال - وليس طبقة الكود. تم تصميم AiVigil خصيصًا لسطح هجوم الذكاء الاصطناعي، حيث يراقب متجهات الوصول الأولية الفريدة التي تقدمها أنظمة الذكاء الاصطناعي.

تحدد AiVigil متجهات الوصول الأولية لطبقة الذكاء الاصطناعي وتغذيها في Nexus AI، طبقة ذكاء مسار الهجوم في CloudSek. يربط Nexus AI بين مخاطر الذكاء الاصطناعي وإشارات التهديد الخارجية من xviGil (الويب المظلم ونشاط الجهات الفاعلة في مجال التهديد) ومخاطر سلسلة التوريد الخاصة بطرف ثالث من Svigil لإنتاج رسم بياني للهجوم تم التحقق منه - يوضح بالضبط كيف سيقوم المهاجمون بربط ثغرة أمنية بالذكاء الاصطناعي ببيانات اعتماد مسربة أو تعرض البائع لمسار هجوم حقيقي وقابل للتنفيذ.

تم تصميم AiVigil من أجل CISOS ورؤساء أمن الذكاء الاصطناعي وفرق العمليات الأمنية وفرق هندسة AI/ML في المؤسسات التي تنشر أنظمة الذكاء الاصطناعي على نطاق واسع. إنه يمنح قادة الأمن الرؤية للإجابة على الأسئلة التي تطرحها المجالس والهيئات التنظيمية الآن: ما هي أنظمة الذكاء الاصطناعي التي نديرها، وما هي ناقلات الوصول الأولية، وكيف نراقب وندير مخاطر طبقة الذكاء الاصطناعي؟